The Open Book Test

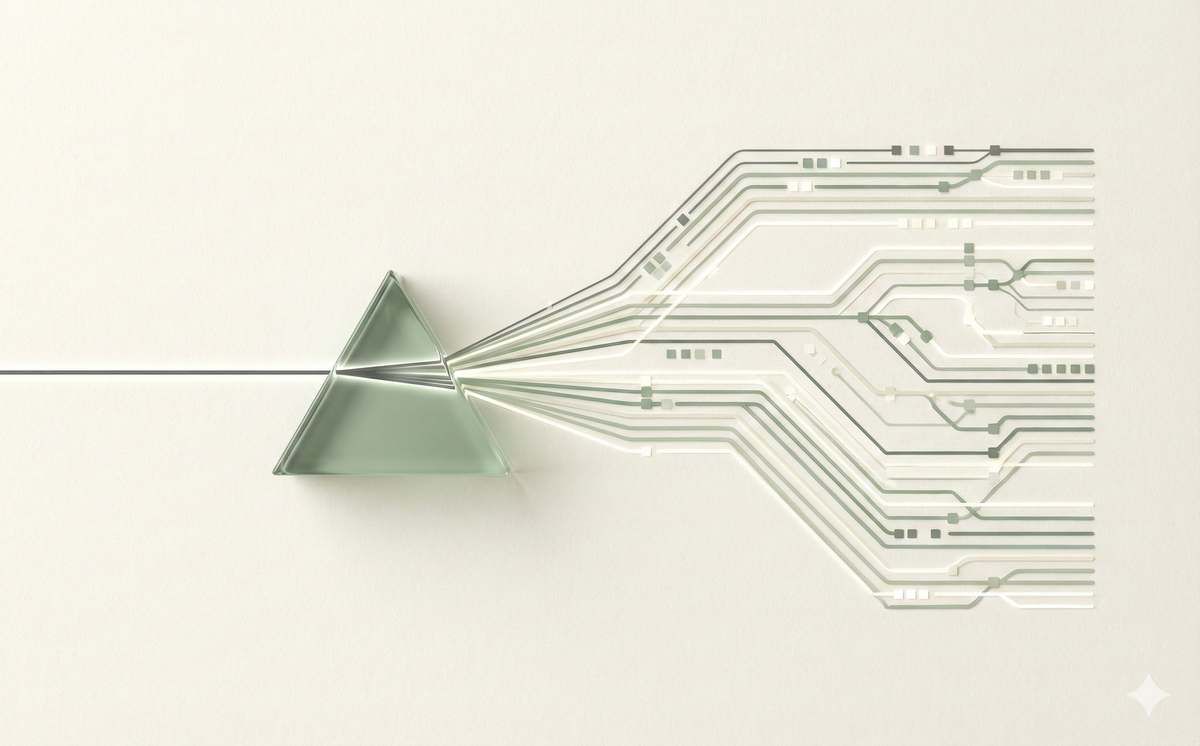

Why the smartest AI isn't the one with the biggest brain, but the one with the best notes. A plain English guide to RAG.

The Open Book Test: How AI Actually "Knows" Things

Here is a terrifying thought: ChatGPT doesn't know anything about you.

It doesn't know your company's revenue. It doesn't know your refund policy. It doesn't know that you specifically asked for the report in PDF format, not Excel.

If you ask it a question about something that happened after it was trained, or something private to your life, it does one of two things:

- It admits defeat: "I don't have that information." (Boring, but safe).

- It lies: It confidently invents a fact because it sounds plausible. (Dangerous).

We call this "Hallucination," but a better word is confabulation. It’s like asking a student a question on a history test they didn't study for. They don't want to leave it blank, so they make up an answer that sounds right.

But wait—if AI doesn't know anything private, how do tools like Perplexity, ChatPDF, or your company's internal AI bot answer questions about your documents?

They cheat.

They turn the exam into an Open Book Test.

The "Open Book" Cheat Code

In the industry, this is technically called RAG (Retrieval-Augmented Generation), but let's just call it what it is: Cheating.

It is the difference between memorizing a textbook and looking at a textbook.

1. The Closed Book (Standard AI)

Imagine you are seated in an empty room. I slide a piece of paper under the door that says: "What is the refund policy for Order #882?"

You have no idea. You might guess: "Standard refund policy is 30 days?" That's a hallucination. You are relying on your internal memory, and your memory is empty.

2. The Open Book (RAG)

Now imagine the same room. But this time, before I ask the question, I slide a dossier under the door. It contains your company's entire handbook and the last 50 emails.

Then I ask: "What is the refund policy for Order #882?"

You don't need to memorize anything. You just look at the dossier, find "Order #882," read the line about refunds, and write the answer.

That is RAG in a nutshell: finding the right "cheat sheet" (Retrieval), handing it to the AI alongside the question (Augmentation), and letting the AI write the answer from it (Generation).
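For the curious, the dossier trick can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works. The `DOSSIER` entries, the 14-day refund line, and the word-overlap scoring are all made up for the example; real systems typically use a search index or embeddings instead.

```python
import re

# Hypothetical dossier: in a real system these would be handbook pages and emails.
DOSSIER = [
    "Order #881: shipped on time. No refund requested.",
    "Order #882: customer may request a refund within 14 days of delivery.",
    "Handbook: employees accrue vacation days monthly.",
]


def words(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word-like tokens."""
    return set(re.findall(r"[a-z0-9#]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Retrieval: rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & words(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, notes: list[str]) -> str:
    """Augmentation: put the retrieved notes in front of the question."""
    context = "\n".join(f"- {note}" for note in notes)
    return f"Use only these notes:\n{context}\n\nQuestion: {question}"


question = "What is the refund policy for Order #882?"
notes = retrieve(question, DOSSIER)
prompt = build_prompt(question, notes)

# This string is what would be sent to the model for the Generation step.
print(prompt)
```

The model never sees the whole dossier here, only the one note that scored highest against the question.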

Why This Changes Everything

Once you understand this "Open Book" concept, you realize that Model Intelligence (how smart the brain is) matters less than Context Quality (how good the notes are).

You don't need a PhD-level genius to look up a phone number. You just need someone who can read a phone book.

This explains why a massive, expensive model might fail to answer a question about your business, while a tiny, cheap model (that has access to your files) gets it perfectly right.

The tiny model has the book open.

Quick Decision Guide: Context Window or RAG?

| Task | Method | Why? |
|---|---|---|
| Summarizing one long PDF | Context Window (Upload it) | It fits in memory. Let the AI read the whole thing. |
| Searching 10,000 company docs | RAG (Search it) | Too big for memory. AI needs to search for relevant pages first. |
| Fixing a specific code file | Context Window (Paste it) | AI needs to see the whole file to spot bugs. |
| Asking about company HR policy | RAG (Search it) | You don't want to paste the whole handbook every time. |
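The size half of that table can be turned into a rough rule of thumb. The sketch below assumes about 4 characters per token (a common approximation for English text) and a hypothetical 100,000-token context limit. Both numbers, and the page sizes, are assumptions to swap for your own model's figures.

```python
def estimate_tokens(total_chars: int) -> int:
    """Rough rule of thumb: about 4 characters per token for English text."""
    return total_chars // 4


def choose_method(total_chars: int, context_limit_tokens: int = 100_000) -> str:
    """Return 'context window' if everything fits at once, otherwise 'RAG'."""
    if estimate_tokens(total_chars) <= context_limit_tokens:
        return "context window"
    return "RAG"


# Assumed sizes: roughly 3,000 characters per page.
one_long_pdf = 50 * 3_000           # one 50-page PDF
company_docs = 10_000 * 5 * 3_000   # 10,000 documents of about 5 pages each

print(choose_method(one_long_pdf))   # context window
print(choose_method(company_docs))   # RAG
```

This only captures the "does it fit?" question. The HR handbook row is about convenience rather than size: even if the handbook fits, you may not want to paste it every time.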

3 Ways to Cheat on the Test (Manual RAG)

You don't need to be a software engineer to use this. You can do Manual RAG in any chat window right now.

Here are three ways to abuse the Open Book test to your advantage:

1. The "Ctrl+F" on Steroids

Standard "Search" (Ctrl+F) is dumb. It only finds exact word matches. If you search for "Billing" but the contract says "Invoicing," you find nothing.

AI searches for meaning.

The Play: Paste a dense 50-page PDF (or use the "Upload" button).

Prompt: "I am pasting a rental agreement. Find every clause that mentions money, fees, or penalties. Quote them directly."

It doesn't matter if the contract calls it a "Deposit" or a "Retainer"—the AI finds the concept, not just the keyword.
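To see why keyword search misses what meaning search catches, here is a toy comparison. One common way software compares meaning is with "embedding" vectors, where similar ideas get similar numbers. The vectors below are written by hand purely for illustration (a real system would get them from an embedding model), and a chat model reading a pasted contract does not literally run this code.

```python
import math

# Toy "meaning" vectors, hand-written for illustration only.
# Assumed dimensions: [money-related, time-related, pet-related]
CLAUSES = {
    "Tenant shall pay a Retainer of $500 before move-in.": [0.9, 0.2, 0.0],
    "Late Invoicing incurs a 5% charge.": [0.8, 0.4, 0.0],
    "No pets are allowed on the premises.": [0.0, 0.0, 1.0],
}

QUERY_TEXT = "deposit"
QUERY_VECTOR = [1.0, 0.1, 0.0]  # hand-written: "deposit" is mostly about money


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Ctrl+F style: only exact text matches count.
keyword_hits = [c for c in CLAUSES if QUERY_TEXT.lower() in c.lower()]

# Meaning style: anything pointing in a similar direction counts.
meaning_hits = [c for c, vec in CLAUSES.items() if cosine(QUERY_VECTOR, vec) > 0.8]

print("Keyword search found:", keyword_hits)
print("Meaning search found:", meaning_hits)
```

The word "deposit" appears nowhere in the clauses, so the keyword search comes back empty, while the meaning search returns both money clauses and skips the one about pets.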

2. The "Style Thief"

Every time you ask AI to "write like me," it fails. Why? Because it hasn't read your diary.

Stop describing your voice. Show it.

The Play: Paste three of your best emails.

Prompt: "Read these three emails to understand my tone (short, punchy, no jargon). Below them is a bulleted list of facts. Write an email using those facts, in the exact style of the three emails."

You aren't asking it to be creative. You are asking it to copy-paste your personality.
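If you find yourself doing this often, the prompt can be assembled by a small helper. This is only a sketch of the idea: the function name, the sample emails, and the facts are all invented for the example.

```python
def build_style_prompt(example_emails: list[str], facts: list[str]) -> str:
    """Put the example emails first, then the facts to write up in that style."""
    examples = "\n\n".join(
        f"Example email {i}:\n{email}"
        for i, email in enumerate(example_emails, start=1)
    )
    fact_list = "\n".join(f"- {fact}" for fact in facts)
    return (
        "Read these emails to understand my tone.\n\n"
        f"{examples}\n\n"
        "Now write a new email using only these facts, in the same style:\n"
        f"{fact_list}"
    )


# Made-up sample data.
emails = [
    "Hi Sam. Report's done. Attached.",
    "Team: launch moved to Friday. Questions? Ask me.",
    "Thanks, Priya. Approved. Go ahead.",
]
facts = ["Meeting moved to Tuesday 3pm", "Budget approved: $4,000"]

style_prompt = build_style_prompt(emails, facts)
print(style_prompt)
```

The examples go before the facts so the model has seen the voice before it is asked to write in it.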

3. The "Reality Check"

This is the most powerful use case of all: Fact Checking.

If you ask AI to write a bio for you, it might invent a degree from Oxford. But if you paste your resume, it stays grounded.

The Play: Paste a source document (e.g., a news article or a policy doc).

Prompt: "I am going to show you a news article. Based only on this text, answer the following question: [Question]. If the answer is not in the text, say 'I don't know.' Do not guess."

This "Grounding" technique forces the AI to turn off its imagination and turn on its reading glasses.
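The same grounding prompt can be wrapped in a reusable template. This is a minimal sketch: the `<<<SOURCE` markers are just one assumed way to fence off the document so the instructions and the text don't blur together, and the sample article is made up.

```python
def build_grounded_prompt(source_text: str, question: str) -> str:
    """Wrap a source document and a question in 'answer only from this' rules."""
    return (
        "Based only on the text between the markers, answer the question.\n"
        "If the answer is not in the text, say 'I don't know.' Do not guess.\n\n"
        "<<<SOURCE\n"
        f"{source_text}\n"
        "SOURCE>>>\n\n"
        f"Question: {question}"
    )


# Made-up source text for the example.
article = "The city library closed for repairs on Monday. No reopening date was given."
grounded_prompt = build_grounded_prompt(article, "When does the library reopen?")
print(grounded_prompt)
```

With this sample article, the honest answer is "I don't know," because no reopening date appears in the source text.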

The Counterpoint: Is this really RAG?

Technically? No.

A purist engineer would tell you that "Real RAG" involves complex database searching. What we are doing here is just "Stuffing the Context Window."

And honestly? They are right.

But from the AI's side of the door, the result is the same. Whether a robot finds the document or you upload it yourself, the mechanism is identical: The AI is answering based on what it is looking at, not what it remembers.

The Takeaway

Stop trusting the AI's memory. Its memory is blurry, outdated, and prone to making things up.

Instead, trust its reading comprehension.

If you want the truth, don't ask the AI to recall it. Give the AI the document, the data, or the context.

Always let it cheat.
